Goodbye MongoDB

Published on 2023-05-27 by Stuart SpenceAfter years of strange problems and bad performance with MongoDB, this week I successfully migrated chesscraft.ca to PostgreSQL. This took lots of preparations, went smoothly, and is performing great, so I thought I'd write about it. Writing here is cathartic. Also, others might find it useful.

Performance

Look what happened after I replaced Mongo with PostgreSQL on 2023-05-23.

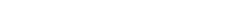

I may now be able to downgrade my instance and save 5$ a month with the 30% memory use reduction. And this graph isn't even showing the wild spikes that happened randomly (not in response to any traffic spike):

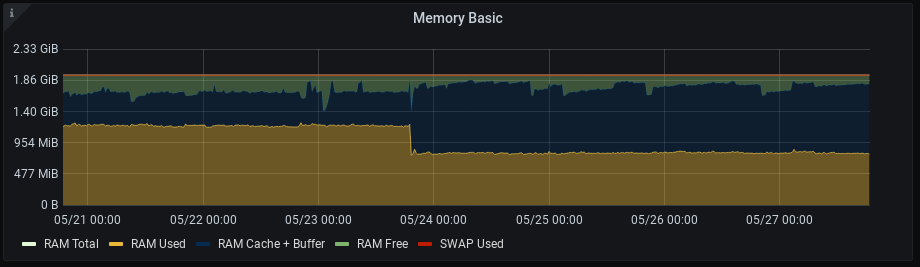

API calls generally take 8 ms now, not 150 ms. Note how the control case /multiplayer stayed constant, because those use redis and not Mongo or PostgreSQL.

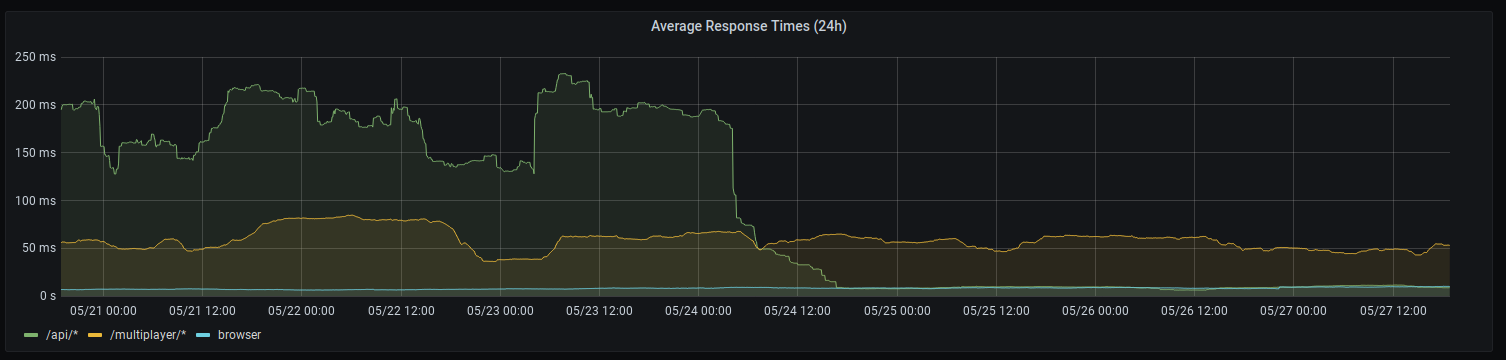

Hard working little CPU finally gets to work 80% less:

I'm trying to stay humble. Mongo must be an incredibly big project with lots of nuance. I'm just a solo developer and absolutely not a database engineer. I've also never had the opportunity to work closely with a good database engineer. However I shouldn't be seeing improvements like this with default out of the box PostgreSQL compared to all the things I tried over the years to fix and tune Mongo.

What is Mongo

Mongo is a way to store data (database) which stores everything as a JSON document. Many other database technologies use SQL, where data is stored in tables which is more like how spreadsheet software uses columns and rows.

Why Mongo

I like JSON. They're comfortably built into Python and JavaScript, and JSON schemas are useful. In 2019, I was also far less comfortable with SQL. So storing all my documents as JSON and writing all my queries as JSON just made sense. So I thought Mongo would be a simpler solution for me. I double checked that Mongo is indeed a fairly popular and mature project and then I got started.

Mongo Problems

Choosing Mongo might be my biggest technical regret of any software project. I read many guides. I tried and measured many configuration changes. My Python-Flask code did backflips for Mongo. Over the years I saw:

- frequent connection errors (ServerSelectionTimeoutError, OperationFailure, AutoReconnect)

- erratic RAM usage far exceeding what my traffic should require.

- out-of-memory kill events from my OS despite plenty of memory remaining. Mongo would take all the memory it could as a buffer (that's fine). My understanding is that it was supposed to return some to the OS as necessary, but instead it would just get killed sometimes.

- upgrading my cloud instance helped memory but not completely, and has a cost which should not be required given my meager traffic.

- sometimes the service would refuse to restart (systemctl restart/start)

- janky upgrade procedures (after apt dist-upgrade)

- janky migration procedures for a new storage engine (WiredTiger)

- no official support for the latest Ubuntu LTS months after release.

- unclear documentation on mongo.com about queries.

- bad pymongo documentation.

I encountered many people who had similar issues.

Finally, writing inserts and updates as JSON is convoluted. What should be a fairly simple operation like: "atomic increment this nested value, or insert if value is not there" was simply a disaster. For example, increment "api" by one, or insert "api":1 if necessary:

{"turtle":123,

"visit_count":{"web":2,

"api":8}}

{"turtle":123,

"visit_count":{"web":3}}

I got this to work in more than one way, but these were the kinds of solutions where you exclaim "I cannot believe this is the best way to do this". My multi-query solutions to this were the hardest to understand part of all ChessCraft web services. I bet a Mongo expert could have done better but... the real problem here is how easy this should be, compared to how hard it turned out to be.

PostgreSQL

I knew it was time to migrate when my non-programmer wife was becoming familiar with Mongo shortcomings. Thanks for listening, Alex.

PostgreSQL was an easy choice. I nearly picked it in 2019, it's FOSS, mature, and very popular. I don't need its fancy features but it's nice that they exist. Due to good planning, my Python class DatabaseManager separates my business logic from the database specifics. So I just had to replace Mongo in all those functions with PostgreSQL. I also have unit tests, functional tests, end-to-end tests, performance tests, and smoke tests. So when all my automated tests passed I knew it was a big milestone.

Later, I ran those tests and manual tests on a full production copy - with Mongo data migrated to PostgreSQL.

One thing that is a bit shocking is that I wrote Mongo-like JSON functionality on top of PostgreSQL and as far as I can tell it runs much faster. For example, one of my Python functions returns SQL results as a list of dictionaries based on the column names of the table:

SELECT a, b, c FROM table1 ;

[{"a":2,"b":3,"c":4},

{"a":11,"b":44,"c":66}]

I guess I just didn't need the flexibility of unstructured JSON documents and NoSQL for my structured JSON documents.

Migration Planning

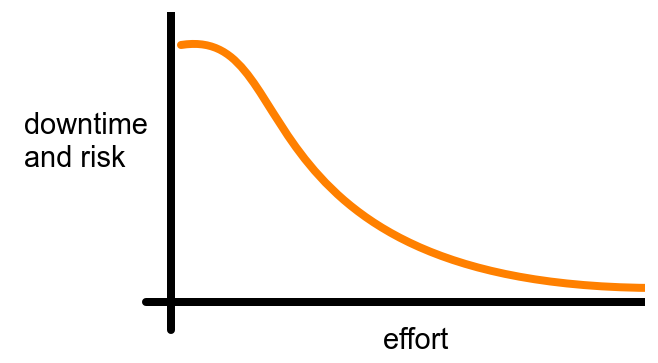

ChessCraft only gets a few hundred board uploads a day, and just several online games an hour. I wanted to minimize disruption, but not go overboard:

There's always risk, but eventually you get diminishing returns and hit an asymptote. Still, I wrote out an 18 step plan with an emergency revert plan and asked ChatGPT for feedback. By the way, here's my generic starter prompt for all my ChatGPT IT questions which I have bookmarked:

for the rest of this conversation, if i ask you a technical question:

1. do not repeat what i asked in your first sentence, just get to it.

2. do not write code comments, unless i am asking for clarification or if i seem confused

3. if i ask for code, do not say "here's the code" or "here's an example", just get to it.

4. if i don't specify a language or technology, assume i'm asking about python, linux, flask, postgresql, digital ocean, or csharp

5. avoid writing overly formal explanations, instead write as if you are an expert friendly colleague who is writing casually but still accurately

6. lean towards FOSS and avoid closed platforms and proprietary software if possible

7. do not apologize if you think you misunderstood me or if you think you got something wrong. just say what is wrong or adjusted. just get to it.

8. answer for an expert programmer, not a newbie programmer

if you understand, respond with "Y"

My plan was written casually just for myself, and with hardly any detail. Nevertheless, ChatGPT delivered some great advice. Incredible. Based on that, I re-ordered one step and added another.

Execution

I send out an announcement in game 24 hours in advance, which was a first. Some superfans organize tournaments now, so I thought it was prudent. I started at 7pm on May 23 and was done about 30 minutes later. All services good. I surprised myself with how well things turned out.

I have since purged "mongo" from my whole project, including my one-shot migration code, though it will live on forever in the git repo.

Post-Mortem

What could have been done better?

- Ironically, the one problem I had during the migration was that chesscraft.ca showed an ugly HTTP error page instead of my simple "the wizard is conjuring, come back later" maintenance override. That feature broke.

- I could have written more sophisticated migration code. For example instead of replacing Mongo immediately, I could have written all new live data to both databases. That way, all data migration could be done before the maintenance window.

I think I hit a perfect balance. Why spend several more hours writing one-shot code, just so 30 user uploads and 5 online games are spared?

I wanted this to be as low effort and painless for me as possible and I nailed it.

So long and thanks for all the JSON

Mongo delivered some of my most exciting updates for ChessCraft like uploading user designs, online multiplayer, and the most popular design pages. I'm grateful that it helped me do that. But I won't be using it again by choice.

Goodbye, Mongo.