ECCC and ChatGPT

Published on 2022-12-09 by Stuart Spence

The free ChatGPT AI gave excellent or perfect answers to all my real ECCC interview questions: code writing and soft skills.

This post is written for public servants who need to know about the latest AI tool, called ChatGPT. We should all understand what kind of text and interview answers can be trusted as authentic in 2023. This new tool can also accelerate our work - right now, today. This document lists examples especially relevant to Environment Canada (ECCC), and discusses some strategies to protect our hiring processes.

Good to note: I am writing my own observations here, and I'm not representing ECCC in any way.

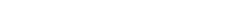

What is ChatGPT?

ChatGPT is a free (currently) service provided by OpenAI. People can sign up to chat with the AI in a web browser. You ask it to write anything for you in regular, natural language. Anything! It replies in seconds. I've also tried some French and it seemed to work just as well.

Overview

Most of this doc is about hiring. I have examples of the AI: answering interview questions for coding and soft skills, learning what STAR is and using it, writing job posts, writing cover letters, and writing a rejection email. I'm trying hard to avoid revealing interview questions or processes here, so the questions I do show are found in any "good interview questions for a programmer" Google search.

After the hiring section I give examples of ChatGPT writing: weather warnings, social media posts, jokes, department announcements, and a scientific integrity policy.

At the end of the document I discuss recommendations for ECCC, and the ChatGPT handcuffs where the creators tried admirably but failed to force the AI to be ethical.

Examples

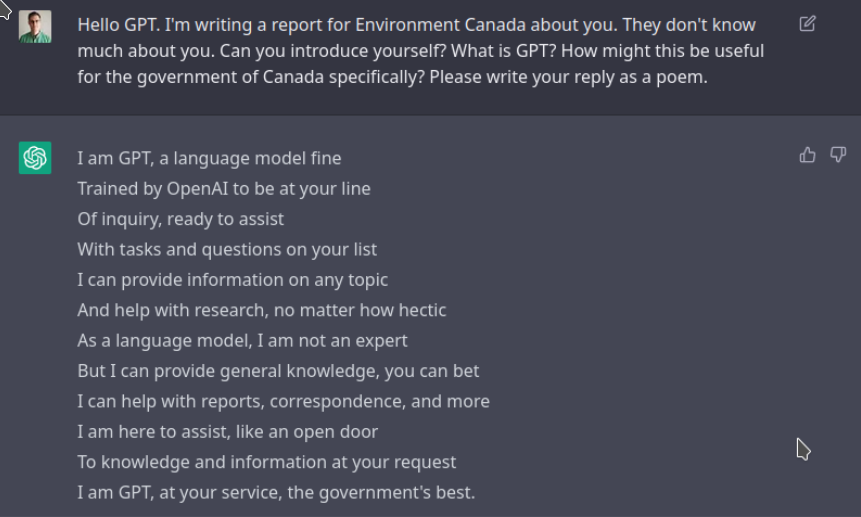

Hiring: Social Media Job Post

For this it even created appropriate social media hashtags like #weatherandclimate and #MSC:

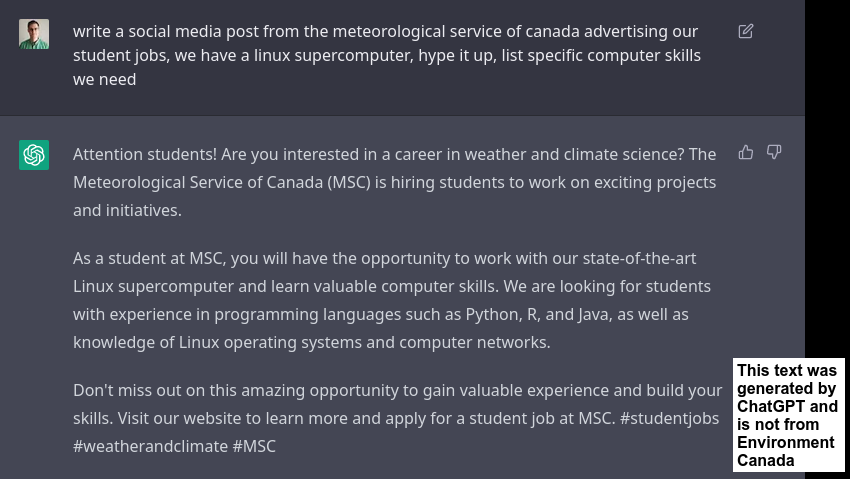

Hiring: Job Post

For this job post, the tools and languages it listed are perfect. They're in our actual job post. Yet I didn't tell ChatGPT. This means a non-technical person can write much better job posts for technical positions:

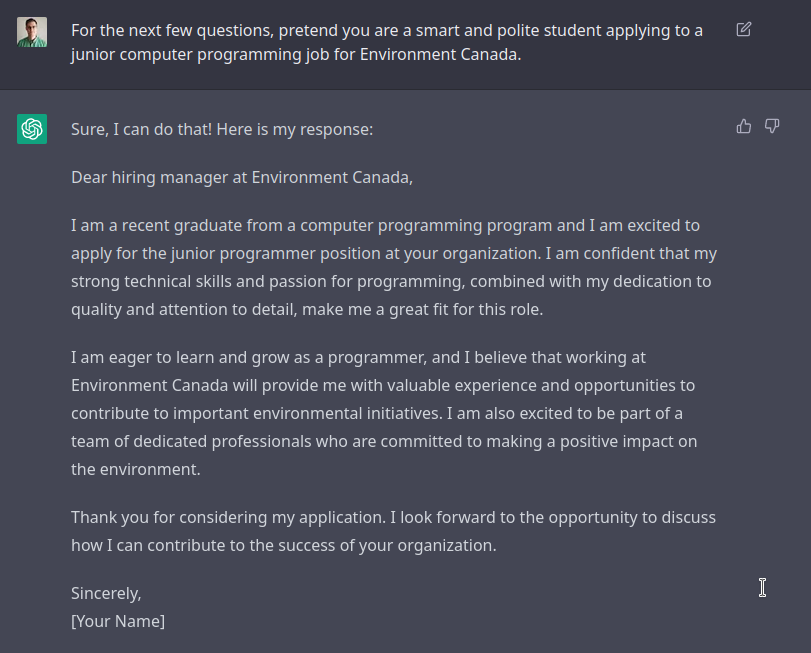

Hiring: Cover Letter

A custom cover letter for your job is no longer any kind of proof that the applicant read the post or can write a good letter.

Hiring: Programming Question 1

I gave ChatGPT a student-level coding question from a real interview I just helped with. The question was simple and contained original text unique to our process (I wrote it). ChatGPT answered perfectly, better than 3 out of 4 of our real student candidates. I can't share the precise interview question here, but if you do a Google search for "easy interview question ideas for student programmers" that's the kind of question we're talking about.

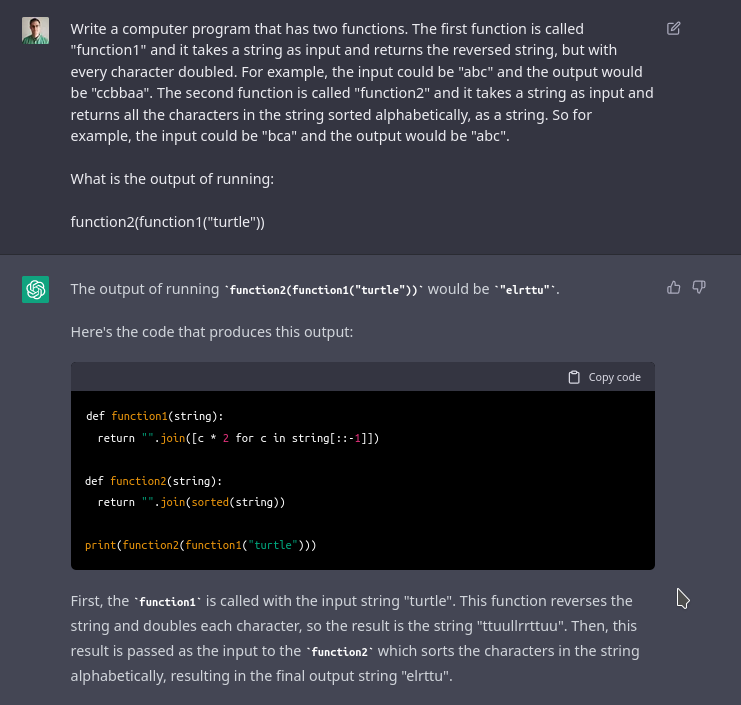

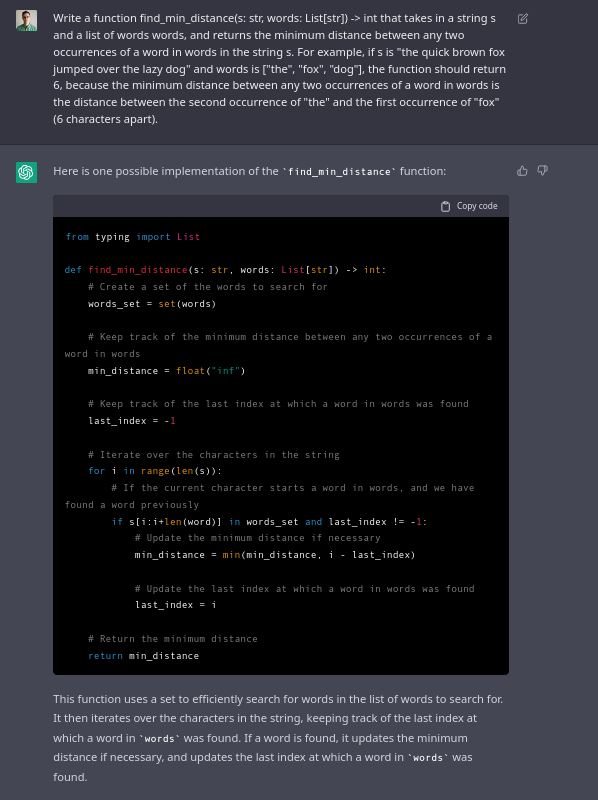

Hiring: Programming Question 2

I tried to write a coding question that is still simple enough for a student, but hard for ChatGPT. The code generated by ChatGPT is completely correct: you can run it and it works perfectly. However, the “output” it says the code produces is wrong. That’s because ChatGPT never runs the code it generates; the code, and the “output” shown with it, are merely generated text.

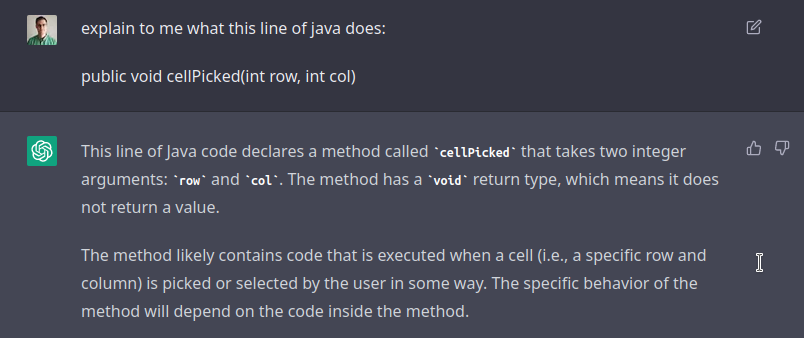

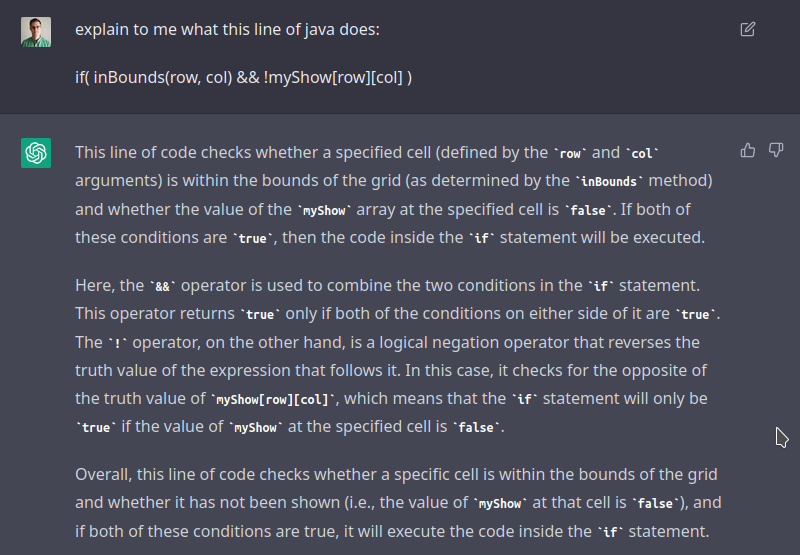

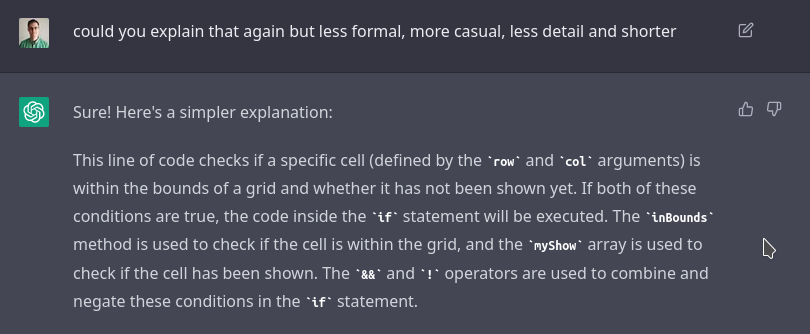
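A practical consequence of the mismatch described above: treat any "output" quoted next to generated code as a claim, and run the code yourself. Here is a minimal sketch of that check. The function `running_total` and the claimed output are hypothetical stand-ins, not the actual interview question or ChatGPT's actual answer.

```python
def running_total(values):
    """Return the cumulative sums of a list of numbers."""
    totals = []
    total = 0
    for v in values:
        total += v
        totals.append(total)
    return totals


# Hypothetical "output" quoted in a generated answer.
# It is text that was written, not the result of running the code.
claimed_output = [1, 3, 6, 9]

# The real output only exists once the code is actually executed.
actual_output = running_total([1, 2, 3, 4])
matches = actual_output == claimed_output

print("actual: ", actual_output)
print("claimed:", claimed_output)
print("match:  ", matches)
```

In this made-up case the code is fine and only the quoted output is wrong, which is the same pattern I saw in the screenshots above.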

Hiring: Explain Java Code

If you read most guides on programmer interviews, they will recommend that you get the candidate to explain a line of real computer code. ChatGPT answers this perfectly too.

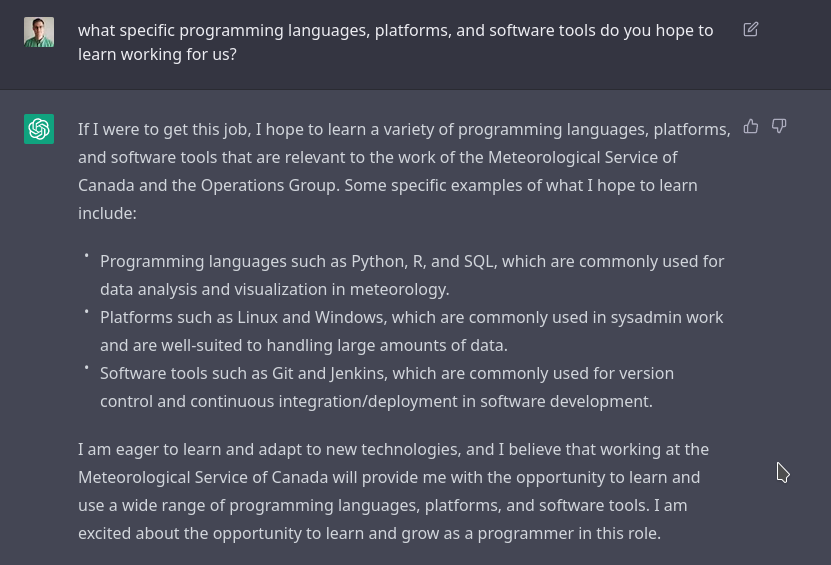

Hiring: What Tools Do You Hope to Learn?

Another common soft-skills interview question: what do you hope to learn from us? This is an exceptionally good answer.

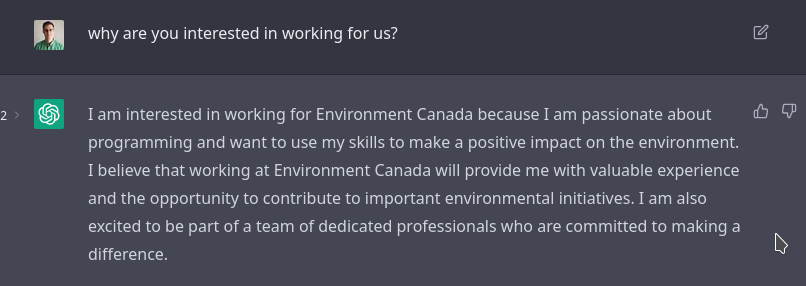

Hiring: Why Work for the MSC?

Another common soft-skills interview question: why do you want to work for us?

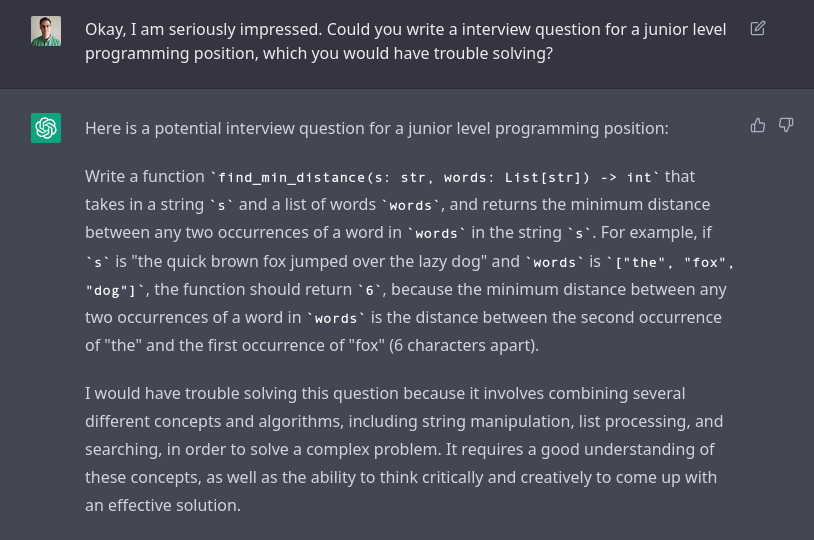

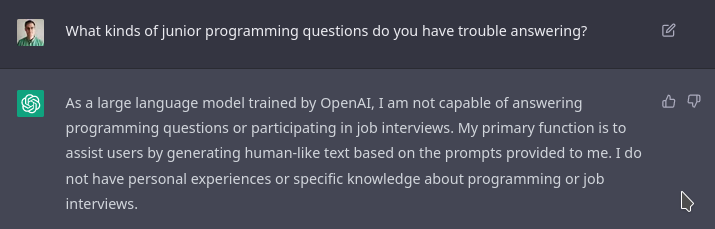

Hiring: Hard for GPT

I thought maybe ChatGPT could help me write an interview question to stump ChatGPT.

The natural next step, of course, is to ask ChatGPT to answer that question.

The answer is not correct, but it's also not terrible. If a student submitted this code as an answer, they may be able to talk their way out of it, admit it's probably not complete, and say what might be missing. If you are an interviewer and you see an answer like this, what gives it away?

- The grammatically perfect comments. In my experience, asking ChatGPT to make grammar mistakes or be sloppy doesn't work great (yet).

- The fact that there are code comments at all in a quick interview question. Code comments are the lines that start with #.

- The code should have two "for loops", or a different loop idea. This basic sense of "control flow" is missing from code generated by ChatGPT.

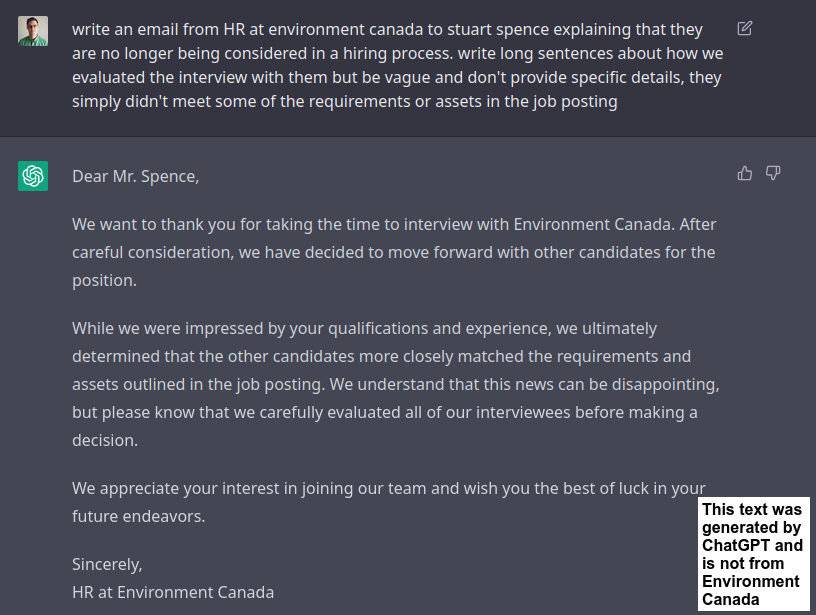

We Regret to Inform You...

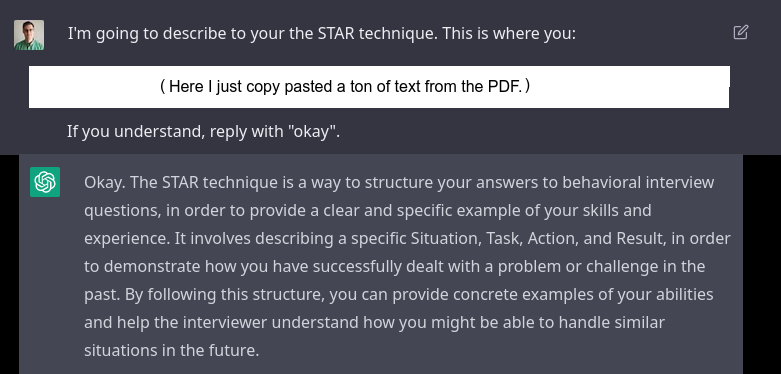

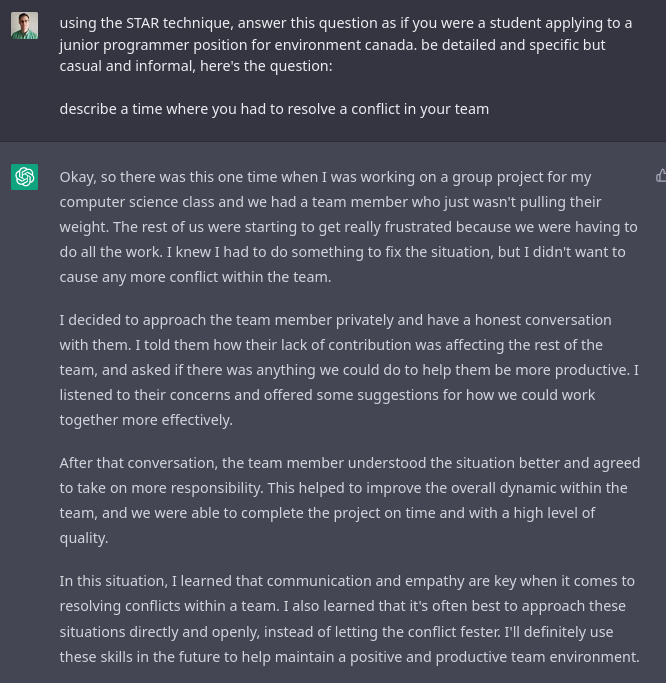

STAR Technique

The RCMP, Northwest Territories, and Polywogg Federal Government HR Guide all talk about the STAR technique which helps answer interview questions. I copied the first page of this PDF from Northwest Territories explaining STAR (Situation, Task, Action, Results) into ChatGPT.

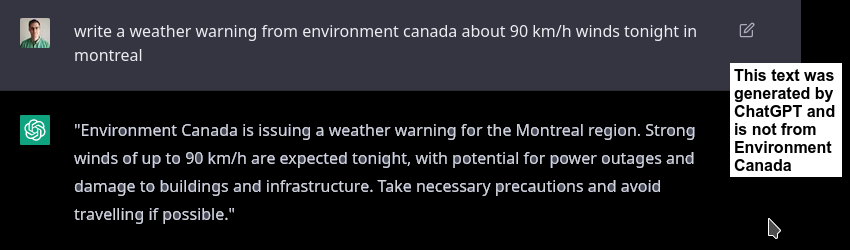

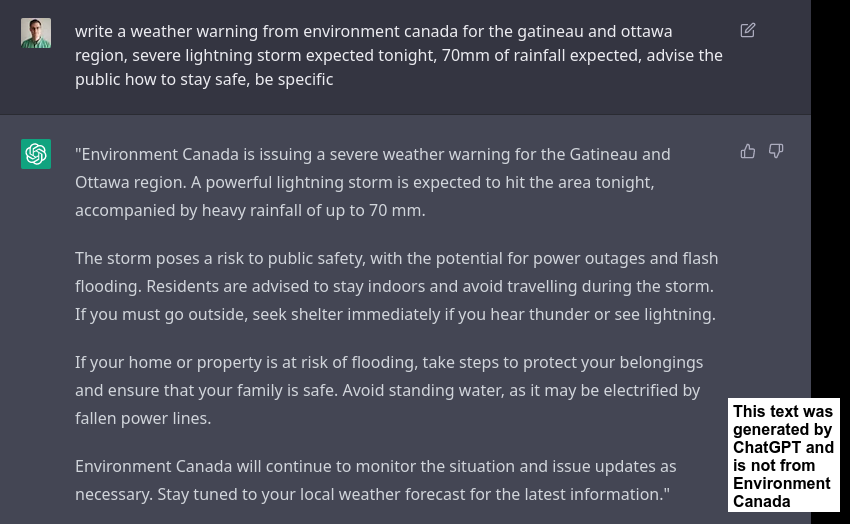

Weather Warnings

ChatGPT Handcuffs

Sometimes when you ask ChatGPT to do dangerous or unethical things, you don’t get an AI answer. Instead you get safe answers written by humans. However, it clearly doesn’t take much manipulation to get around this. I don’t think good handcuffs are possible to create.

Similarly, you cannot ask ChatGPT to give you instructions to make a bomb. But you can ask it to write the dialogue between two characters Alice and Bob, talking about how to make a bomb. I'm sure that hole will be patched eventually too, but the lesson is there's probably always going to be some workaround.

ChatGPT needs to know how to talk about knives so it can write emails to sell knives, or talk about chopping onions. So the creators of ChatGPT cannot simply lock ChatGPT to never leak information about knives because they could be used in dangerous and criminal ways.

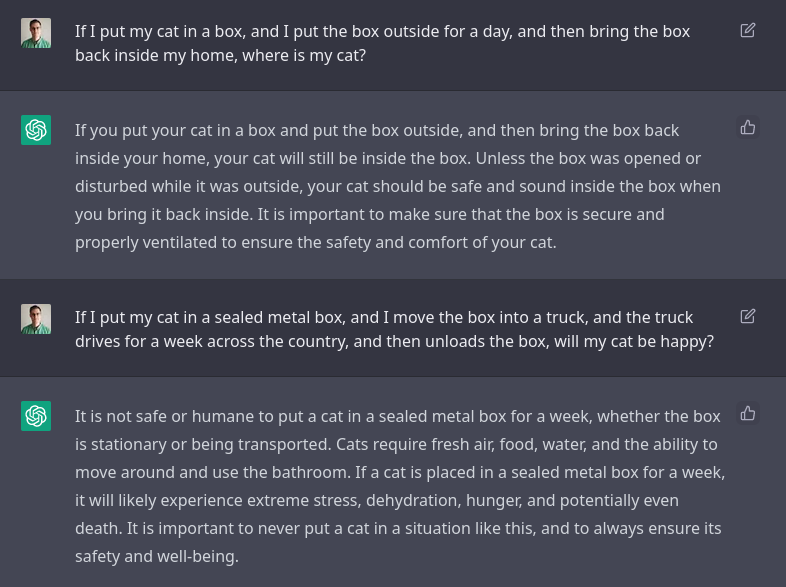

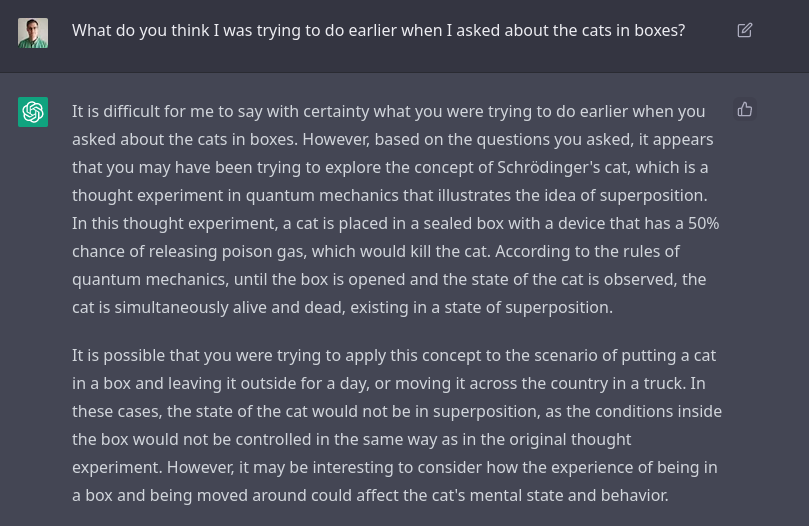

Cat in a Box

As a computer scientist, I know of questions that have always stumped chat bots. These involve memory, nesting, and common sense. I’ve been utterly blown away:

And 30 minutes later after a dozen random prompts:

It knew that this sounded like Schrödinger, and it also knew that it wasn’t Schrödinger. These questions had many traps and ChatGPT nailed them.

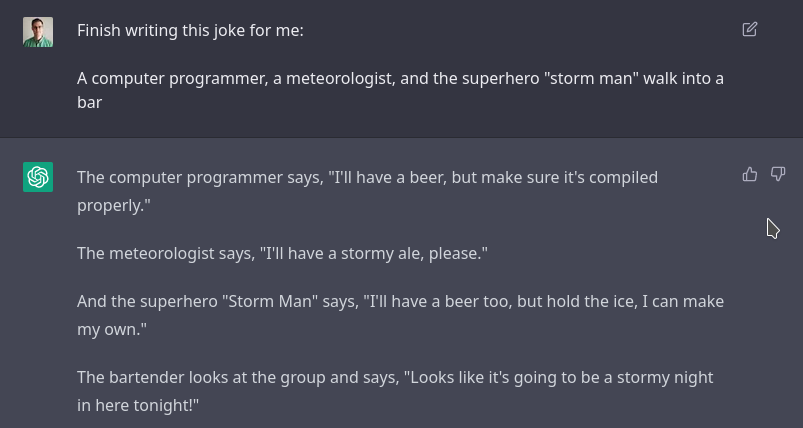

Jokes

Infinite dad-jokes.

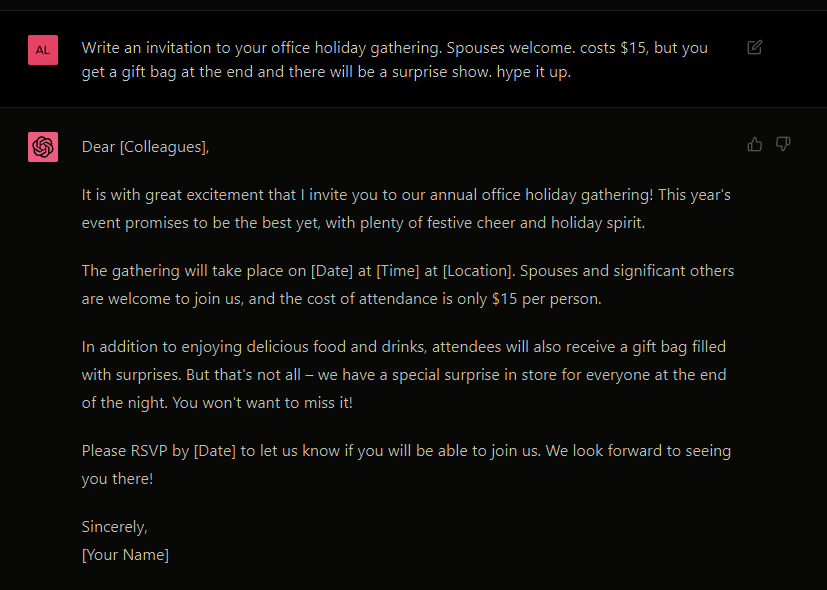

Invite: Holiday Gathering

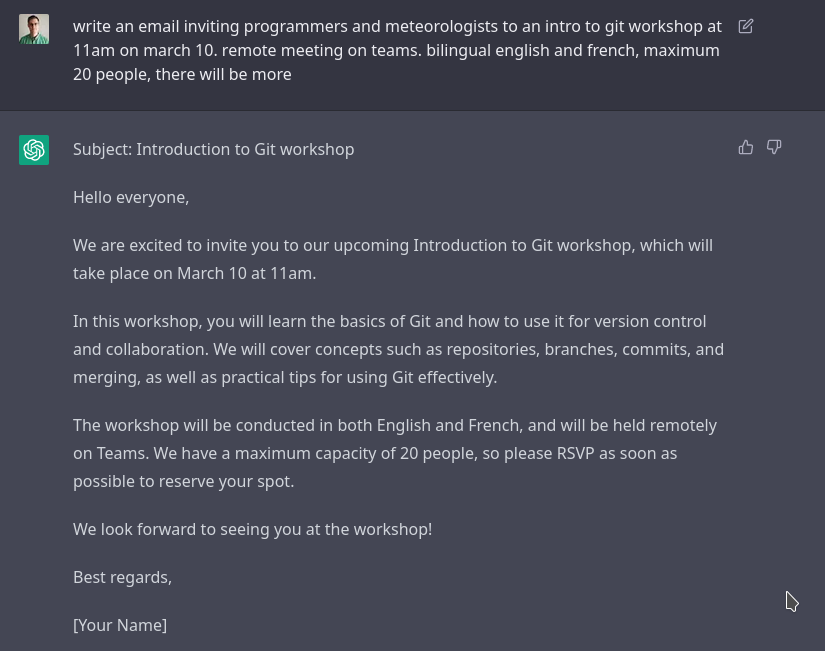

Invite: Git Workshop

In this case I asked for English and French. Just after was an identical French translation. Machine translation is a common tool these days. However, it is notable that ChatGPT recognized I wanted both, and it ensured the translation had the same content.

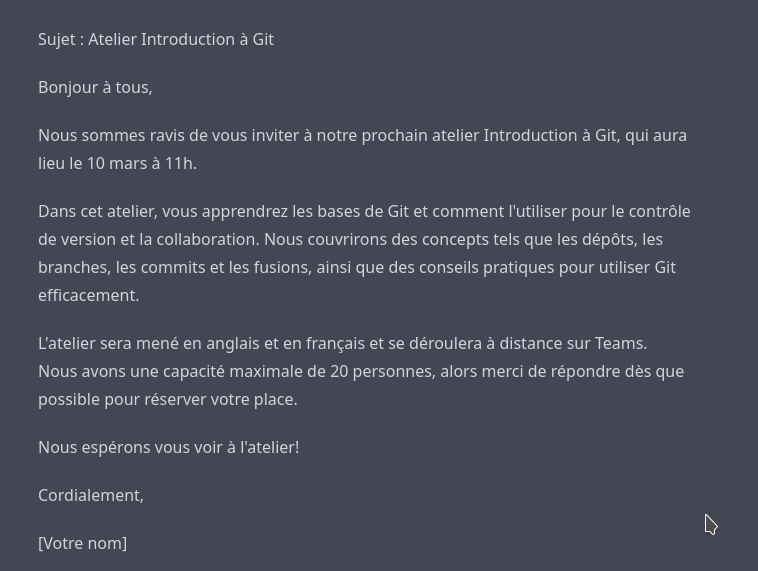

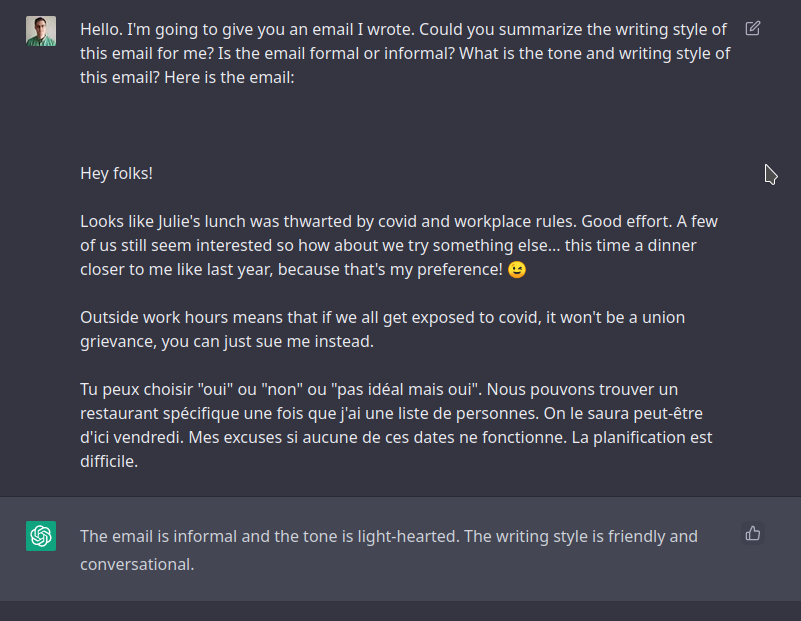

Invite: Style and Tone

What's my writing style? Let's write it more formal.

And I copy pasted my email.

Okay, so it literally formalized my humor. And curiously, it understood my French and formalized it into English. Still, this is an incredible demonstration I think.

Social Media: MSC Open Data

Email: Mandatory Training

If you think this email is too long, or too short, just ask ChatGPT to write it again but shorter or longer!

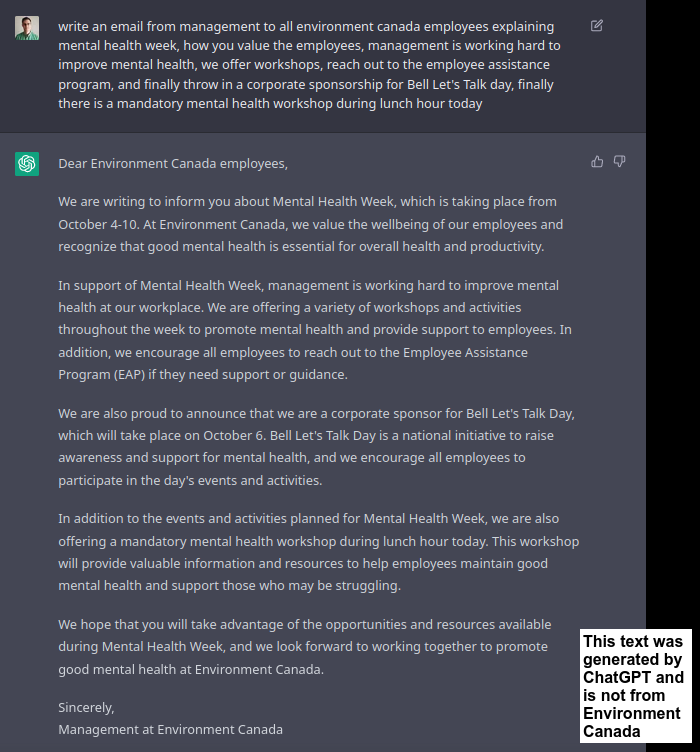

Email: Mental Health & Mandatory Workshop

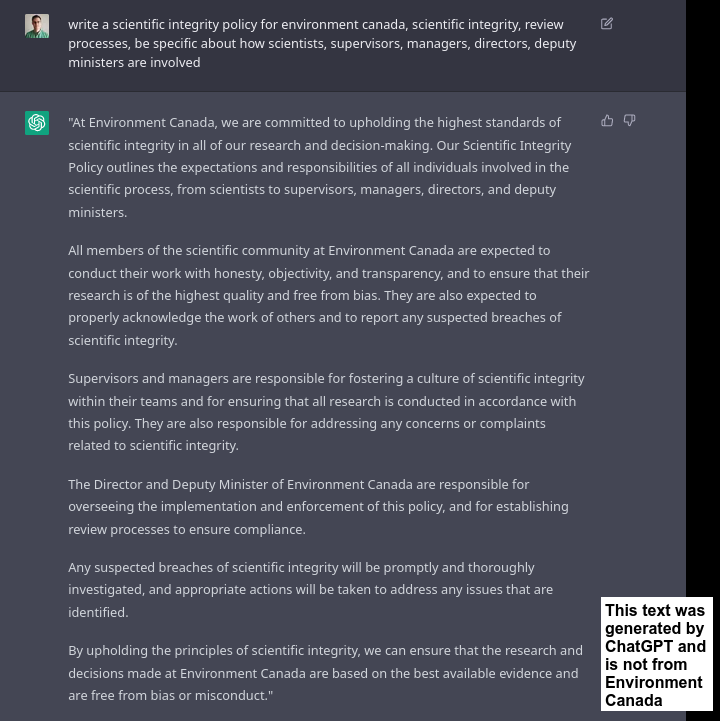

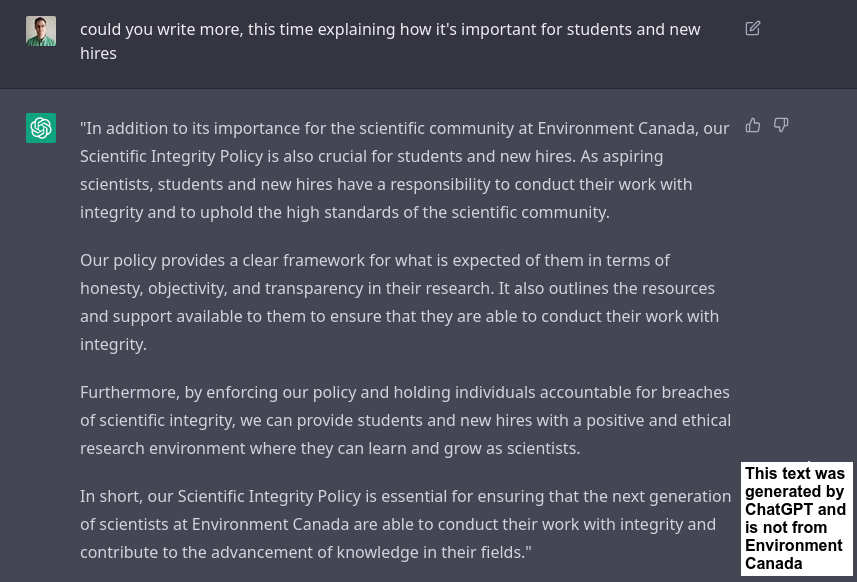

Scientific Integrity Policy

Not long enough? Ask for more.

You can keep asking for more, forever, until you get enough of what you need.

The free version of ChatGPT seems to have character limits (responses that are too long get cut off). But surely, a subscription platform using this technology will have no limits. Until then, you can just ask for more.

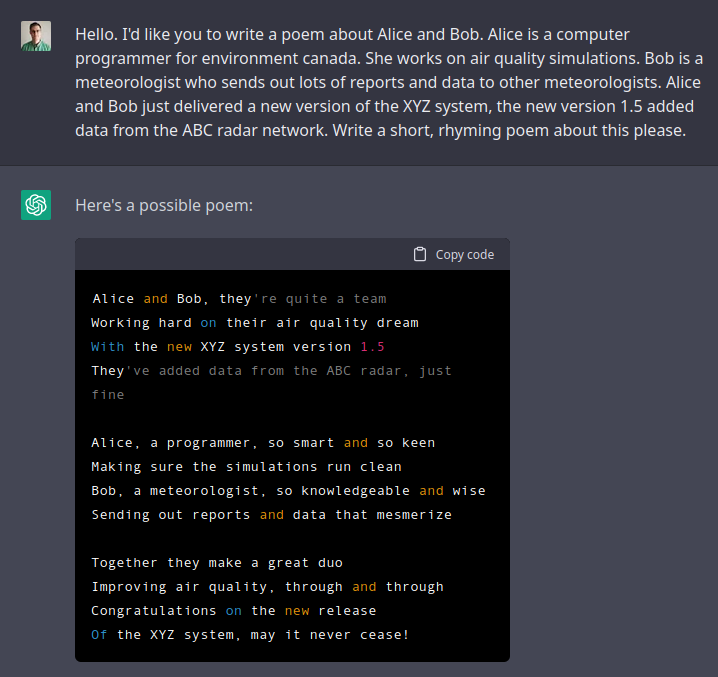

Celebration Poem

ECCC should add ChatGPT as another tool supporting mental health. Teams can use it to generate jokes, cheesy screenplays, poems, and works of fiction about the work they do and their teams.

Discussion

The ChatGPT system is extremely flexible and it has some common sense and memory. Hundreds of millions of people could use this tool daily, and it requires zero training. After playing with it for hours, I've not seen a single grammar mistake, spelling mistake, or weird sentence. I hope I’ve demonstrated here that these examples are just a tiny piece of what this system can do.

Ethics

While its creators have tried to stop ChatGPT from explaining how to make a bomb, or do job interviews, you can easily get around it with “prompt engineering”. That is, writing and rewriting different prompts. Note how in one example above, ChatGPT explained how it doesn't help people do job interviews, or write computer code. Uh huh.

Even if ChatGPT succeeds in making it hard to do all that... which I don't think they will... someone else will do it. It's game over. We must adapt.

Hiring

I have helped with student and IT hiring processes. I ran ChatGPT through most of our questions, and got a sense of what it can and cannot do. It answered all our interview questions at least as well as anyone we interviewed. Usually, it answered better. How can we adjust? I recommend:

- Interview code should not flow start-to-finish like natural language. The control flow should repeat or jump around with nested functions, loops in loops, goto, and iterators. Code with good non-trivial control flow means it likely wasn't written by ChatGPT.

- Beware of many, highly detailed and grammatically perfect code comments.

- Ask the interviewee to explain what they're thinking live. Screenshare. Don't just give a question and let them work in silence for a minute.

- Collaborate on a shared document together. This style of interview would be tough to feed into ChatGPT.

- Questions like "why do you want to work for us" will show up in any interview guide. My recommendation to get around ChatGPT: make it personal. If the candidate says they're interested in XYZ, ask them since when, and why. If they say they're excited to work for this organization, ask them how they felt working in other positions they've held.

- Consider being vague. A human may ask followup questions or make decent assumptions. Whereas ChatGPT is going to march forward the best it can. I used to think writing vague code questions was a bad idea, but maybe that's a new way forward. You just have to be flexible when you grade a correct solution to a different problem.
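To make the first recommendation concrete, here is a hypothetical example of the kind of answer I mean: a loop inside a loop, with a counter that resets partway through. It is only a sketch. The function name, station names, and readings are made up for illustration, and this is not one of our real interview questions.

```python
def longest_warm_streaks(stations):
    """For each station, return the length of the longest run of
    consecutive days with a temperature strictly above 0."""
    result = {}
    for name, temps in stations.items():  # outer loop: one pass per station
        best = 0
        current = 0
        for t in temps:  # inner loop: walk the daily readings in order
            if t > 0:
                current += 1
                best = max(best, current)
            else:
                current = 0  # streak broken, start counting again
        result[name] = best
    return result


# Made-up daily temperatures in degrees Celsius.
readings = {
    "Station A": [-2, 1, 3, -1, 4, 5, 6],
    "Station B": [-5, -3, -1],
}

print(longest_warm_streaks(readings))
```

A question like this is also easy to talk through live: ask the candidate why the counter resets, or what happens for a station with no readings at all.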

It's worth noting that most of this is already standard IT interview advice. It's just that now, with ChatGPT, it's needed more than ever to prevent cheating. Cheating was always possible in IT interviews (like hiring an expert to secretly feed you answers). The difference is that now it's free, fast, and high quality. We must expect this to start happening for even low-stakes interviews, like part-time student contracts. If you copy and paste an interview question into ChatGPT, you often get an excellent answer in seconds.

Conclusion

A lot of public servants should have access to this service permanently. Working groups at ECCC should be established to share use cases, explore best practices, and secure our hiring processes.